xiji2646-netizen

GPT Image 2 + Seedance 2.0 pipeline - what's your experience with the storyboard grid approach?

Been using a two-stage workflow for AI video production that’s been consistently more reliable than text-to-video:

-

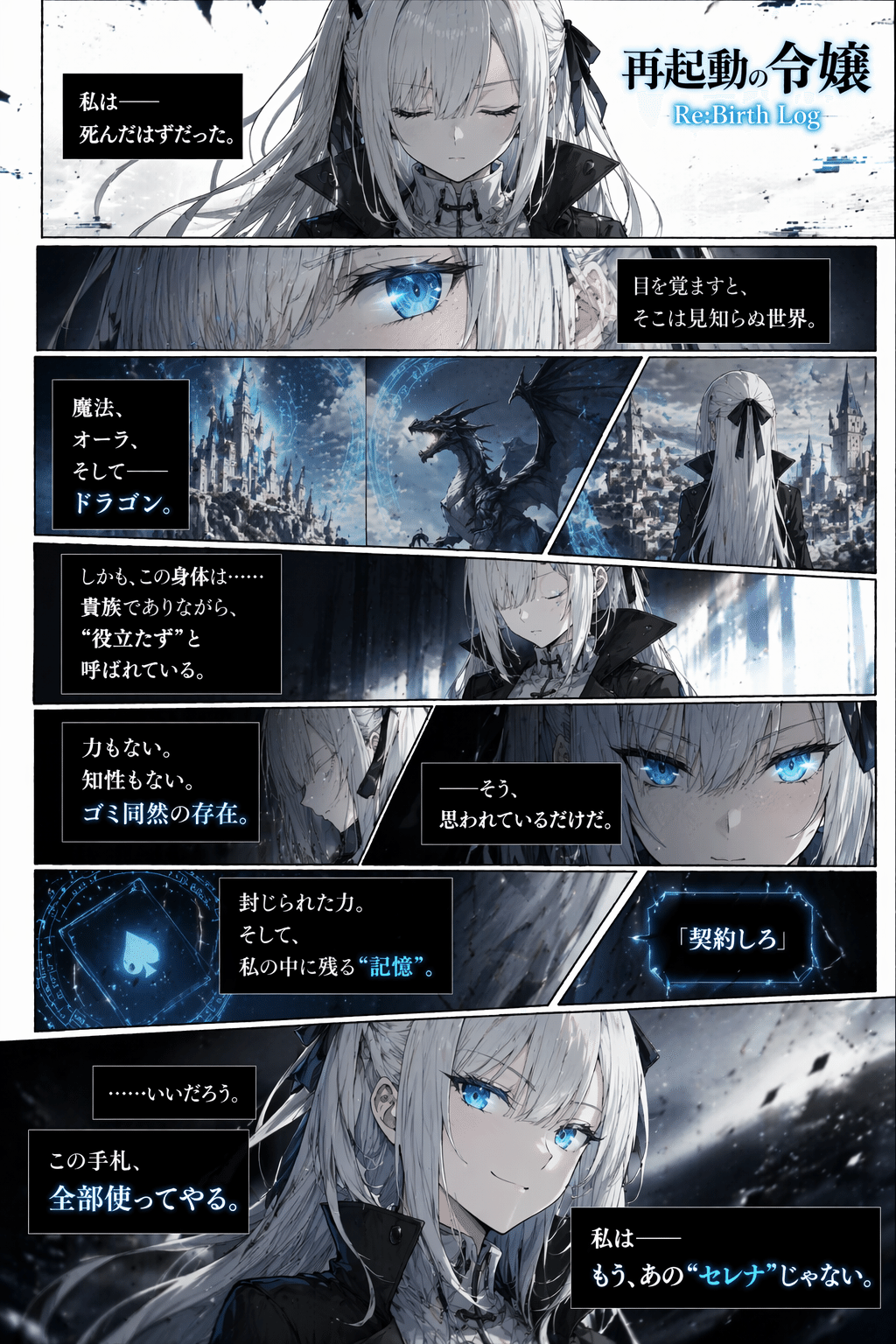

Generate a 3×3 storyboard grid with GPT Image 2 (each panel = one shot)

-

Use that grid as the starting frame for Seedance 2.0 with a shot-by-shot motion prompt

Storyboard grid example

The main advantages over direct text-to-video:

-

Pacing is controlled before you touch the video model

-

Character consistency is much stronger (all shots generated in one unified image)

-

Seedance 2.0 interprets the storyboard as a multi-shot sequence rather than a single drifting clip

For anime-style content, the same principle applies with character sheets and comic pages as the input.

The key insight: final video quality depends heavily on input image quality. GPT Image 2 is very good at producing structured visual assets that work well as video inputs.

Prompt library for storyboard grids, character sheets, and more:

Has anyone tried variations on this? Curious whether 4×4 grids work better for longer pieces, and how you’re handling the motion prompt structure for complex sequences.

Popular Ai topics

Other popular topics

Categories:

Sub Categories:

Popular Portals

- /elixir

- /rust

- /wasm

- /ruby

- /erlang

- /phoenix

- /keyboards

- /python

- /js

- /rails

- /security

- /go

- /swift

- /vim

- /clojure

- /java

- /emacs

- /haskell

- /svelte

- /typescript

- /onivim

- /kotlin

- /c-plus-plus

- /crystal

- /tailwind

- /react

- /gleam

- /ocaml

- /elm

- /flutter

- /vscode

- /ash

- /html

- /deepseek

- /opensuse

- /zig

- /centos

- /php

- /scala

- /react-native

- /lisp

- /sublime-text

- /textmate

- /nixos

- /debian

- /agda

- /django

- /deno

- /kubuntu

- /arch-linux

- /nodejs

- /ubuntu

- /spring

- /revery

- /manjaro

- /lua

- /diversity

- /julia

- /markdown

- /quarkus